Title: ACE: Sending Burstiness Control for High-Quality Real-time Communication

Authors: Xiangjie Huang, Jiayang Xu (Hong Kong University of Science and Technology); Haiping Wang, Hebin Yu (ByteDance); Sandesh Dhawaskar Sathyanarayana, Shu Shi (ByteDance); Zili Meng (Hong Kong University of Science and Technology)

Scribe: Letian Zhu (Xiamen University)

Introduction

Modern real-time communication (RTC) demands ultra-low latency and consistently high visual quality. However, as content becomes more dynamic and network RTTs shrink below 30 ms, a critical bottleneck emerges: long-tail queuing latency in the sender’s pacing queue, which sits between the encoder and the network. This problem stems from a fundamental mismatch between the bursty frame streams produced by video encoders and the smooth traffic patterns that networks expect. Existing solutions do not address this mismatch effectively: they either force an undesirable trade-off between latency and video quality through aggressive bitrate smoothing, or rely on static pacing mechanisms that cannot adapt to varying network conditions and frame characteristics.

Key idea and contribution

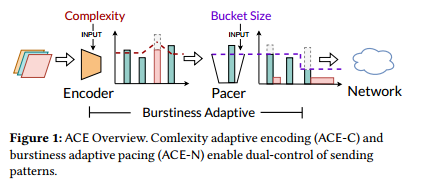

The authors propose ACE, a dual-control approach that manages both encoding and transmission burstiness through two complementary mechanisms. First, ACE-N (burstiness-adaptive pacing) dynamically adjusts the bucket size of a token-based pacer at frame-level granularity, allowing controlled bursts when network conditions permit while preventing buffer overflow. It estimates network buffer occupancy from fine-grained packet arrival patterns and adapts the pacing strategy accordingly. Second, ACE-C (complexity-adaptive encoding) smooths frame sizes without sacrificing quality by exploiting the complexity-size trade-off in modern video codecs. ACE-C selectively increases encoding complexity for oversized frames (typically fewer than 5% of frames), trading computational overhead for reduced transmission delay. The system operates orthogonally to existing congestion control algorithms, focusing on sub-RTT sending patterns rather than bandwidth estimation.
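To make the ACE-N idea concrete, here is a minimal sketch of a token-bucket pacer whose bucket size can be changed per frame. It is not the authors' implementation: the class `TokenBucketPacer`, the `choose_bucket` policy, the `queue_budget_bytes` parameter, and every number in the demo are assumptions made for illustration, and the buffer-occupancy estimate is simply passed in rather than derived from packet arrival patterns.

```python
from dataclasses import dataclass


@dataclass
class TokenBucketPacer:
    """Token-bucket pacer whose bucket size (burst allowance) can change per frame."""

    rate_bps: float      # refill rate; stands in for the congestion controller's target rate
    bucket_bytes: float  # maximum burst the pacer may release at once
    tokens: float = 0.0  # bytes that may be sent immediately

    def set_bucket(self, bucket_bytes: float) -> None:
        """Resize the bucket; tokens above the new cap are discarded."""
        self.bucket_bytes = bucket_bytes
        self.tokens = min(self.tokens, bucket_bytes)

    def refill(self, dt_ms: float) -> None:
        """Add rate * dt worth of tokens, capped at the bucket size."""
        self.tokens = min(self.bucket_bytes, self.tokens + self.rate_bps / 8000 * dt_ms)

    def try_send(self, packet_bytes: int) -> bool:
        """Consume tokens for one packet if enough are available."""
        if self.tokens >= packet_bytes:
            self.tokens -= packet_bytes
            return True
        return False


def choose_bucket(est_queue_bytes: float, queue_budget_bytes: float,
                  min_bucket: float, max_bucket: float) -> float:
    """Hypothetical policy: permit bursts up to the estimated spare buffer room."""
    headroom = queue_budget_bytes - est_queue_bytes
    return max(min_bucket, min(max_bucket, headroom))


def pacing_delay_ms(frame_bytes: int, pacer: TokenBucketPacer,
                    packet_bytes: int = 1200) -> int:
    """Milliseconds until the last packet of a frame leaves an initially full pacer."""
    if pacer.bucket_bytes < packet_bytes:
        raise ValueError("bucket must hold at least one packet")
    pacer.tokens = pacer.bucket_bytes  # assumption: the pacer was idle before this frame
    remaining, elapsed_ms = frame_bytes, 0
    while remaining > 0:
        size = min(packet_bytes, remaining)
        if pacer.try_send(size):
            remaining -= size
        else:
            pacer.refill(1.0)  # advance simulated time by 1 ms
            elapsed_ms += 1
    return elapsed_ms


if __name__ == "__main__":
    frame = 30_000  # an oversized frame, in bytes (made-up value)
    pacer = TokenBucketPacer(rate_bps=8_000_000, bucket_bytes=1_200)
    print("static small bucket:", pacing_delay_ms(frame, pacer), "ms")
    # Made-up estimate: 2 kB queued in the network, 14 kB of queue budget.
    pacer.set_bucket(choose_bucket(2_000, 14_000, 1_200, 20_000))
    print("adapted bucket:", pacing_delay_ms(frame, pacer), "ms")
```

With these illustrative numbers, the adapted bucket lets the same frame leave the pacing queue sooner, while the refill rate, and therefore the long-run sending rate, stays at the value set by congestion control. When the estimated queue exceeds the budget, `choose_bucket` falls back to `min_bucket`, which corresponds to near-smooth pacing.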

Evaluation

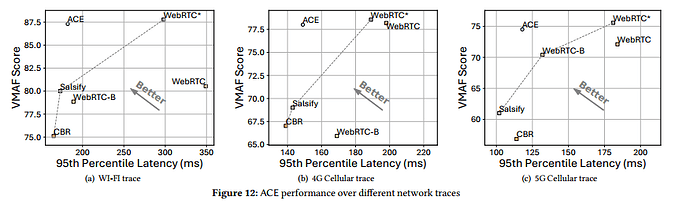

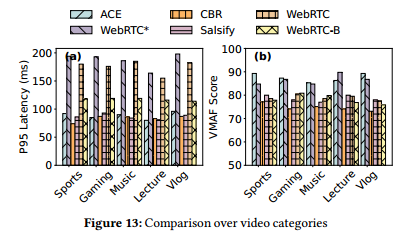

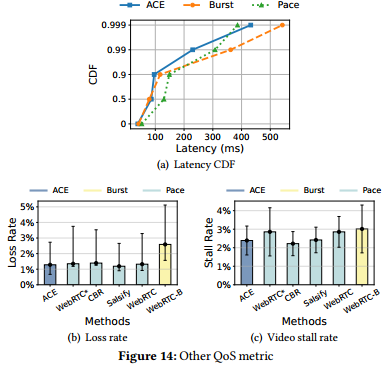

The authors implemented ACE on top of WebRTC and the libx264 encoder, and evaluated it through trace-driven emulation and real-world experiments across diverse network conditions (WiFi, 4G, 5G). ACE reduces 95th-percentile end-to-end latency by up to 43% compared to state-of-the-art WebRTC while keeping VMAF scores on par with the highest-quality baselines. The system performs particularly well on high-motion content (70% latency reduction for gaming videos) and provides modest improvements for low-motion content. This result is significant because it demonstrates that careful management of sending patterns can dramatically improve RTC performance without fundamental changes to existing video codecs or congestion control mechanisms, which makes ACE practically deployable in current systems.

Questions

Q1: Why does this solution only work for RTC? For VOD, we also have bursts, for example, when a startup or app store activity happens. Does granularity matter since in VOD cases it’s probably in hours?

A1: That’s a good question, but for me it’s a question for future work. I started with RTC and solved the problem there, and I haven’t yet considered how this work could be applied to VOD and video streaming. So I guess the answer is yes.

Q2: I’m confused because pacing is a form of congestion control. All these RTC solutions run on top of Google congestion control, so they should already shape the traffic. If you remove congestion control, there will be bursts.

A2: I’m not actually removing any congestion control. The congestion control is there, and it’s actually GCC (Google Congestion Control), which determines the target rate. The frames produced by the video encoder will have data fluctuating around that bitrate, and I just control whether those tiny bursts can be sent out or not. On the large time scale, the rate is still the rate determined by the congestion control.

Q3: In classical congestion control, pacing can be detrimental to throughput. I’m wondering how fair you are to the rest of the traffic. You allow these bursts to go through, but don’t consider the impact on other traffic. These bursts might create queuing and make latency performance worse for other flows.

A3: We are aware of that, and we are only controlling a tiny part of the congestion control. That’s why we also evaluate fairness, to demonstrate that we don’t affect other streams.

Personal thoughts

ACE represents a thoughtful approach to a real problem in modern RTC systems. The dual-control mechanism is elegant: it addresses both the source of burstiness (encoding) and its impact (transmission). The design's orthogonality to congestion control is particularly smart, since it allows deployment without disrupting existing infrastructure. However, complexity-adaptive encoding may have limited applicability beyond scenarios with significant content variation, and the power consumption implications for mobile devices warrant further investigation. The work opens interesting questions about dynamic resource allocation in multimedia systems and the potential for ML-driven optimization of these trade-offs.