Title: ACM Community Session

Hosts: Matthew Caesar (University of Illinois Urbana–Champaign), Christophe Diot (Google)

Scribes: Mengrui Zhang (Xiamen University), Yunxiao Ma (Inner Mongolia University)

The ACM SIGCOMM community meeting began with an overview of the event’s agenda: first addressing SIG business for thirty minutes, followed by a panel discussion on reviewing practices. The emphasis was on fostering community participation, encouraging attendees to share their input directly.

Diversity and Inclusion: The session highlighted the importance of diversity and inclusion as core values of the SIG, while noting current challenges due to reduced funding and shifting corporate priorities. Despite resource constraints, the SIG continues to support impactful initiatives such as N2Women, TMA PhD School, and regional conferences like COMSNETS and APNet. The ambassador program, which connects passionate individuals with community needs, has shown success, particularly in Southeast Asia and China. Future focus areas include extending geo-diversity efforts to Africa and supporting women in computing. The SIG also announced an open call for a new Director of Diversity and Inclusion, emphasizing this as a critical moment for leadership and vision.

Southeast Asia Pods: A representative from Thailand described the SIGCOMM Southeast Asia Pods initiative, which streams SIGCOMM conference content to local hubs across countries like Thailand, Vietnam, Malaysia, and the Philippines. These pods provide exposure to SIGCOMM-level research and enable discussions and Q&A sessions with paper authors. Attendance numbers have grown steadily, and the initiative has been supported with travel grants for students.

Financial/Budget: The financial update reported that the SIG is in strong health, with nearly $3 million in reserves. Revenue comes mainly from conferences and membership, though membership has declined from over 2,000 to around 800. The leadership stressed their intent to spend more proactively, particularly on diversity initiatives, travel grants, and regional support, while maintaining financial stability. Upcoming challenges include adapting to ACM’s new open-access policy, which imposes an article processing charge (APC). SIGCOMM plans to treat APCs as grants, assisting authors from less wealthy institutions who cannot afford the fees.

Education: Updates were given on three new initiatives: the SIGCOMM paper reading group, the scribe program, and a shadow PC. The paper reading group has gained wide participation, especially in Asia, by encouraging non-authors to present papers and stimulating interactive discussions. Recordings are available online, making it a valuable resource for the community. These initiatives aim to strengthen learning, inclusivity, and engagement among students and early researchers.

New Award: A new award, the ACM SIGCOMM Distinguished Community Service Award, was announced. Unlike existing research-focused awards, this one recognizes outstanding service contributions to the community, highlighting the often-unseen work of volunteers and leaders. Nominations will be sought annually, with recipients honored at SIGCOMM alongside traditional research awards.

SIGCOMM 2026 Location: Eric Keller announced that SIGCOMM 2026 will be held in Denver, Colorado, with Sangtae Ha as co-chair. The announcement highlighted Denver’s balance of urban amenities, historical character, and natural attractions, from a vibrant tech scene to nearby Rocky Mountain landmarks, making it an appealing destination for attendees.

CoNEXT 2025 & 2026: Finally, announcements were made regarding upcoming CoNEXT conferences. CoNEXT 2025 will take place in Hong Kong, promising a strong program with record submissions. Looking further ahead, CoNEXT 2026 will be hosted in Utrecht, the Netherlands, led by general chairs from the University of Twente. These events continue to strengthen the international presence and participation of the SIGCOMM community.

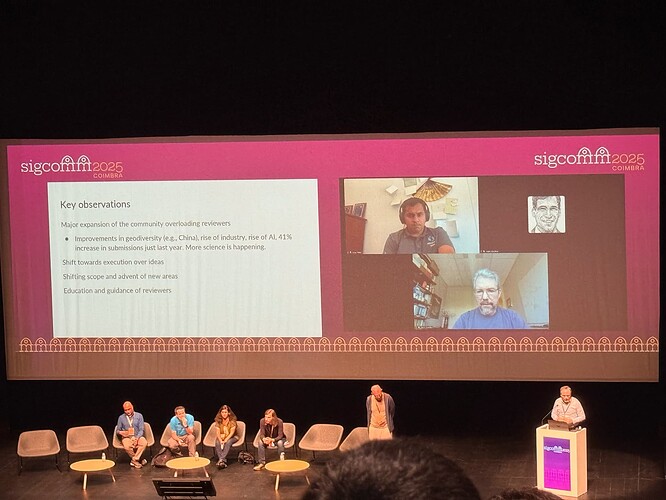

Panel: Community Discussion: Reviewing Practices

Panelists: Christophe Diot (Google), Sujata Banerjee (Microsoft), Brighten Godfrey (University of Illinois), Srikanth Kandula (AWS), George Porter (UC San Diego), Vyas Sekar (CMU), Geoff Voelker (UC San Diego), and Keith Winstein (Stanford University).

Introduction: This panel is a community discussion focused on improving reviewing practices at SIGCOMM. Building on earlier conference reforms that were well received, such as non-paper sessions and dual tracks, the community is now turning its attention to the quality and scalability of the review process. The motivation comes from the growing number of paper submissions, the rising pressure on program committees, and concerns about maintaining fairness, innovation, and efficiency. The session brought together panelists including current and former PC chairs, members of the technical steering committee, and the Director of Change, to share insights, experiences, and ideas for possible reforms.

Questions and opinions:

George Porter: Emphasized that experimenting with new mechanisms (e.g., shorts, highlighting thought-changing papers) helped increase acceptance, diversity, and size. Evidence shows that trying new approaches can “move the needle.”

Sujata Banerjee: Reflected on challenges since COVID-19, including remote PCs and multitasking pressures. Suggested considering models from other communities, such as multiple deadlines, open reviews, and external reviewer pools, to scale reviewing and ease stress.

Brighten Godfrey: Pointed out the inefficiency of repeated rejections and resubmissions, which delay scientific progress and frustrate authors and reviewers. Advocated for an iterative review model with a large portion of papers given a “revise” option and continuity of reviewers, speeding up acceptance and reducing redundancy.

Srikanth Kandula: Shared anecdotes about reviewers reversing positions or ignoring prior feedback, leading to poor outcomes. Stressed the importance of reviewers finding reasons to say “yes,” standing by positive recommendations, and focusing on innovation rather than only flaws.

Vyas Sekar: Noted that everyone, including experienced researchers, faces rejection. Emphasized the importance of continued experimentation and finding a fair equilibrium. Introduced dual-track reviewing in both NSDI and SIGCOMM as examples of bold changes worth testing.

Keith Winstein: Highlighted his role as Director of Change in facilitating consensus and practical experimentation. Suggested trialing “mini-conferences” within SIGCOMM to test alternative review models while protecting the main conference. Stressed the need for careful but bold experimentation.

Geoff Voelker: Proposed adopting a self-nomination system for reviewers, inspired by the USENIX Security community. This would reduce PC recruitment overhead, expand the reviewer pool, and help SIGCOMM incorporate expertise from new areas.

Q1:

First, I want to thank the HotNets chairs for showing flexibility and humanity when we requested a deadline extension during a very difficult time in my community. Second, I want to share a story: at one conference, we had a paper rejected. Later, I rewrote the paper without adding new experiments; I only reorganized the text. A reviewer then wrote, “Thanks for adding this experiment,” even though the experiment was already there and had only been moved. This shows that reviews can contain factual errors. My suggestion is that PC chairs consider using AI to check reviews for factual correctness (with authors’ consent), which would greatly help authors.

A1:

That’s an interesting idea. AI could be used to flag common review issues, such as when reviewers claim similarity to prior work but don’t cite that work. At the same time, authors themselves are in the best position to respond to factual issues. A structured conversation process between authors and reviewers could help clarify misunderstandings and ensure fairness.

Q2:

I strongly want a dialogue between authors and reviewers, not just one-shot rebuttals. When controversies or questions arise, authors should be able to clarify, negotiate, and suggest alternatives. This would make reviews more constructive.

A2:

In the past, we’ve allowed rebuttals, but they were limited. A smoother process for author–reviewer exchanges could help, but it raises issues such as whether reviewers should reveal their identity. Still, having better rebuttal discussions is worth exploring.

Q3:

First, has the community considered adopting a VLDB-style rolling review process with monthly revise-and-resubmit deadlines? This provides continuous dialogue and faster iteration. Second, what is SIGCOMM’s stance on posting papers on arXiv before acceptance?

A3.1:

VLDB-style review is appealing, but arXiv raises cultural challenges. In our field, a polished PDF on arXiv is often treated as authoritative, even before peer review. This can mislead readers and affect fairness. Unlike math or physics, our community is not fully ready for this model.

A3.2:

VLDB’s rolling review is an interesting model and worth experimenting with, though it may not reduce overall burden.

A3.3:

ArXiv introduces trade-offs. It accelerates dissemination but weakens double-blind reviewing and complicates comparisons. Some communities treat arXiv as the timestamp of a paper, protecting authors from unfair scooping. We should discuss carefully before adopting such practices.

A3.4:

Currently, SIGCOMM allows submissions that also appear on arXiv. However, reviewers are instructed not to judge papers based on arXiv preprints or use them as grounds for rejection. Still, issues remain, such as deanonymization risks and unclear comparisons with non–peer-reviewed work.

Q4:

Many authors complain about poor reviews. Could we adopt corporate-style onboarding for reviewers—e.g., mentoring new reviewers, spot-checking reviews, and allowing reviewers to decline assignments without stigma? This would strengthen the reviewer community and encourage better quality.

A4.1:

Good point. But frustrations exist on both sides: authors often feel mistreated, and reviewers feel overburdened and underappreciated. Reviewers see themselves as protecting readers, while authors may appear transactional (e.g., asking if they can skip comparisons or omit limitations). This cultural tension fuels “grumpy reviewer” behavior. Addressing review quality requires balancing both author and reviewer frustrations.

A4.2:

Reviewer selection is already careful: PCs consist of active contributors with prior authorship experience. Training happens via activities like shadow PCs, though these have limitations. Reviewer quality cannot be solved purely with automation; it requires judgment and commitment from the community.

A4.3:

Relying on arXiv papers as “prior art” is a slippery slope—sometimes authors are asked to compare against work that isn’t even published yet.

Q5:

Shadow PCs, while useful to show junior researchers the process, are ineffective at teaching good reviewing. Better methods include onboarding exercises, feedback from senior reviewers, and structured reviewer training (e.g., IMC’s research task force). As the community grows, training new reviewers systematically is crucial.

Q6:

When running shadow PCs, I carefully reviewed every shadow review, which improved quality significantly, so shadow PCs can work if done properly. But I also worry about submission overload: some authors submit 18–30 papers, which seems unsustainable. We may need to limit submissions and reconsider the acceptance balance between “experience papers” and research papers, as the former currently enjoy higher acceptance rates.