Title: DeepSpace: Super Resolution Powered Efficient and Reliable Satellite Image Data Acquisition

Scribe : Hongyu Du (Xiamen University)

Authors: Chuanhao Sun, Yu Zhang (The University of Edinburgh); Bill Tao, Deepak Vasisht (University of Illinois Urbana-Champaign); Mahesh K. Marina (The University of Edinburgh)

Introduction

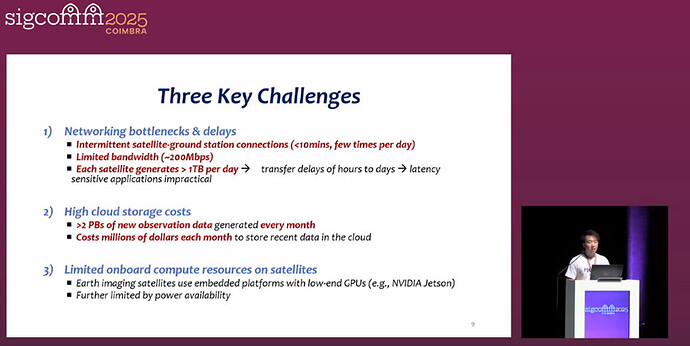

Low Earth Orbit (LEO) satellite constellations can frequently capture high-resolution images of the Earth, supporting numerous geospatial applications such as disaster monitoring, climate change assessment, and agricultural analysis. However, they generate hundreds of terabytes of data every day. This data must be transmitted to Earth over limited and intermittent connections with ground stations (each contact lasts less than 10 minutes, with a bandwidth of about 200 Mbps and only a few contacts per day), so downloads are delayed by anywhere from several hours to several days. At the same time, over 2 PB of new observational data must be stored in the cloud each month; at the prices of mainstream cloud service providers, the monthly storage cost amounts to several million dollars. In addition, the computing resources on board satellites are limited (for example, only small GPUs such as the NVIDIA Jetson), and power must be prioritized for critical tasks, which makes it difficult to run computationally intensive compression algorithms.

Existing methods for acquiring satellite images have clear shortcomings. Lossless compression (such as “7z”) has an extremely low compression ratio (about 1.03), while lossy compression (such as traditional interpolation, compressive sensing, and autoencoders) achieves a compression ratio far lower than required. Some autoencoder methods are too computationally expensive to run on the satellite. Moreover, most methods cannot guarantee the quality of the reconstructed image. As a result, none of them simultaneously delivers a high compression ratio, efficient on-satellite computation, and reliable decompression, and a new end-to-end compression system is needed.
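To make the downlink constraint concrete, the following back-of-the-envelope sketch computes how much data one contact can carry at the figures quoted above (10 minutes, 200 Mbps) and how the compression ratio changes the amount of raw imagery that can be delivered per day. The function names and the value of 6 contacts per day are assumptions for illustration; the summary only says there are "few" daily contacts.

```python
def contact_capacity_gb(duration_s: float, bandwidth_mbps: float) -> float:
    """Upper bound on the data volume (GB) that one ground-station contact can carry."""
    bits = duration_s * bandwidth_mbps * 1e6
    return bits / 8 / 1e9


def raw_imagery_per_day_gb(contacts_per_day: int, compression_ratio: float) -> float:
    """Raw imagery (GB) deliverable per day when data is compressed before downlink."""
    per_contact = contact_capacity_gb(duration_s=600, bandwidth_mbps=200)
    return contacts_per_day * per_contact * compression_ratio


if __name__ == "__main__":
    # One 10-minute pass at 200 Mbps carries at most 15 GB.
    print(contact_capacity_gb(600, 200))
    # Hypothetical 6 contacts per day, lossless ratio of about 1.03.
    print(raw_imagery_per_day_gb(6, 1.03))
    # Same contacts with a 100x compression ratio.
    print(raw_imagery_per_day_gb(6, 100))
```

Under these assumptions a single satellite delivers on the order of 90 GB of raw imagery per day with lossless compression, which is why a ratio of about two orders of magnitude matters for download delay.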

Key idea and contribution

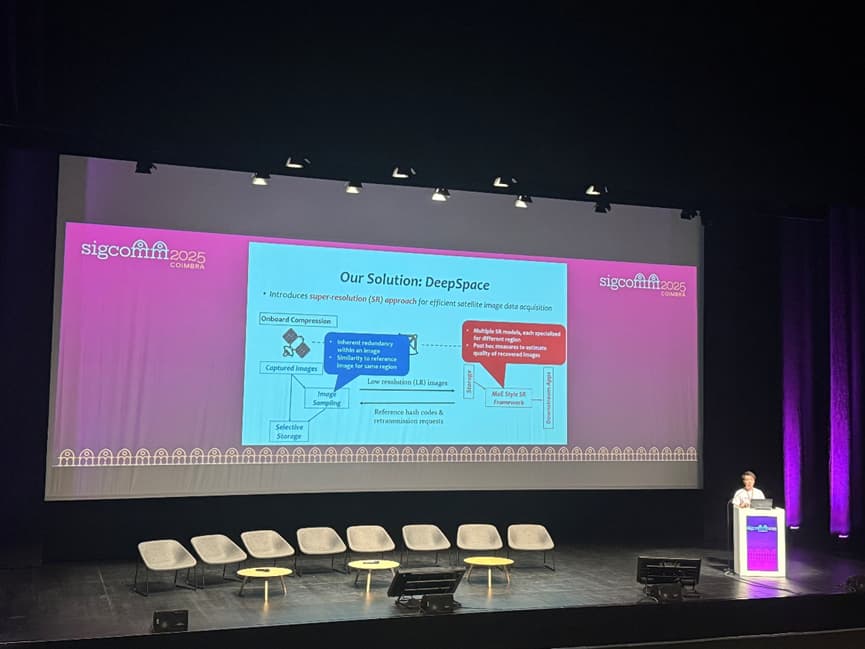

This paper proposes DeepSpace, a deep-learning-based satellite image data acquisition system that addresses the transmission, storage, and computing constraints of LEO satellite imagery. On the satellite, high-resolution images are compressed into low-resolution versions through lightweight image sampling. The compression ratio is adjusted adaptively per block by combining each block's inherent redundancy with its similarity to a reference image. Potentially anomalous images, those with both low redundancy and low similarity, are temporarily stored on the satellite for retransmission. In the cloud, images are decompressed on demand by a customized Mixture-of-Experts (MoE) super-resolution framework. The framework contains multiple “expert” models based on wavelet diffusion models and selects the best expert for each image block through a four-level classification (region type, redundancy level, input size, and cloud cover level). Low-resolution similarity and hash similarity are combined as posterior indicators of reconstruction quality; when the value falls below a threshold, retransmission is triggered so that the reconstructed image meets the requirements of downstream applications. The scheme is also computationally efficient on the satellite and achieves a compression ratio of more than two orders of magnitude, significantly reducing both the bandwidth needed for satellite-to-ground transmission and the cost of cloud storage.
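The block-adaptive compression and the posterior quality check described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the scoring functions, the thresholds, the factor set, and all names are hypothetical, average pooling stands in for the paper's lightweight sampling, and the posterior check uses only a low-resolution consistency test (the hash similarity indicator is omitted).

```python
from statistics import pvariance
from typing import List, Optional

Block = List[List[float]]  # square grayscale block, pixel values in [0, 1]


def redundancy(block: Block) -> float:
    """Hypothetical redundancy score: 1.0 for a flat block, lower with more texture."""
    pixels = [p for row in block for p in row]
    return 1.0 / (1.0 + 100.0 * pvariance(pixels))


def similarity(a: Block, b: Block) -> float:
    """Hypothetical similarity score: 1 minus the mean absolute pixel difference."""
    diffs = [abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb)]
    return 1.0 - sum(diffs) / len(diffs)


def choose_factor(block: Block, reference: Block,
                  factors=(2, 4, 8), hold_below: float = 0.3) -> Optional[int]:
    """Pick a per-side downsampling factor, or None to hold the block on board."""
    score = max(redundancy(block), similarity(block, reference))
    if score < hold_below:  # low redundancy AND low similarity: possible anomaly
        return None
    return factors[min(int(score * len(factors)), len(factors) - 1)]


def downsample(block: Block, factor: int) -> Block:
    """Average-pool a square block whose side is divisible by factor."""
    size = len(block) // factor
    return [
        [
            sum(block[r * factor + i][c * factor + j]
                for i in range(factor) for j in range(factor)) / factor ** 2
            for c in range(size)
        ]
        for r in range(size)
    ]


def needs_retransmission(reconstructed: Block, low_res: Block,
                         factor: int, threshold: float = 0.9) -> bool:
    """Posterior check: re-downsample the reconstruction and compare with what was sent."""
    return similarity(downsample(reconstructed, factor), low_res) < threshold
```

In this sketch a flat block that matches its reference gets the largest factor, while a highly textured block that also differs from the reference returns `None` and would be held for retransmission. Note that a per-side factor of f reduces the pixel count by f², so a factor of 8 already gives a 64x reduction in pixels.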

Evaluation

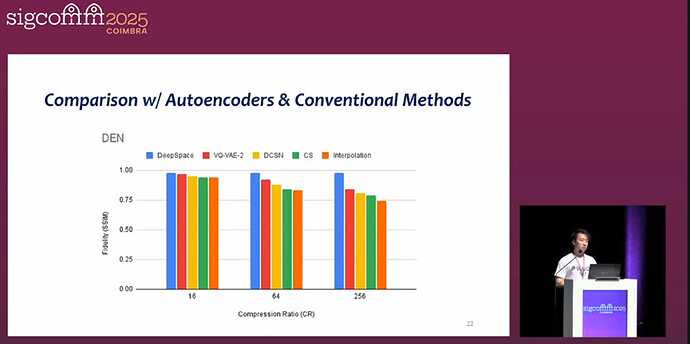

The paper presents a comprehensive experimental evaluation of DeepSpace. On multiple satellite image datasets, including Planete-CAL, Planete-HK, FarmVibes, DEN-3, and DEN-12, it is compared against baselines from traditional compression, autoencoders, orbital edge computing (OEC), and super-resolution methods from computer vision. The results show that DeepSpace achieves a compression ratio of two orders of magnitude, much higher than existing methods, while maintaining high reconstruction fidelity. In terms of computing and storage efficiency, on-satellite processing is two orders of magnitude faster than the OEC method, uplink usage is only about 10 Kbps, and on-satellite storage demand is significantly lower than the baselines. In downstream applications such as wildfire detection, marine plastic detection, land use classification, and farmland classification, images reconstructed by DeepSpace after more than 100x compression perform close to the original images, which supports the effectiveness and practicality of the solution.

Q&A

Q : You assume the satellite only downlinks directly to ground stations during very limited contact windows. Can you explain why alternative architectures such as inter-satellite relay were not considered, and how this assumption is justified?

A : You mean I should consider inter-satellite links. At present, and especially for the system we were considering when developing this work, inter-satellite links were not available. Even over very short intervals, the connection is not very consistent. Our system can still send all the images to the ground. If inter-satellite links were always available, we could reduce latency further.

Personal thoughts

The DeepSpace solution proposed in this paper is both innovative and practical in its technical design and its application. On the technical side, it is the first to combine deep learning super-resolution with lightweight sampling. Through block-adaptive compression and a customized MoE super-resolution framework, it resolves the trade-off among compression ratio, computational efficiency, and reconstruction quality that limits traditional methods, achieving a compression ratio of more than two orders of magnitude while preserving high-fidelity reconstruction. In terms of application value, the solution significantly reduces satellite-to-ground transmission delay and cloud storage cost, and it verifies that reconstructed images remain usable in key downstream tasks such as wildfire detection, marine plastic identification, and farmland classification, providing a feasible path toward efficient use of high-resolution satellite images. There is still room to extend the solution. For instance, one could combine multi-source satellite data to further improve compression and reconstruction performance, or use more advanced ground-based models to reduce training costs. Nevertheless, the current design fills a gap in existing satellite image compression technologies and is a valuable reference for future work in geospatial observation.