Title: Edge Caching as Differentiation

Authors

Muhammad Abdullah (EPFL); Mughees Ur Rehman (Virginia Tech); Pavlos Nikolopoulos (EPFL); Katerina Argyraki (EPFL)

Scribe: Rulan Yang (Xiamen University)

Introduction

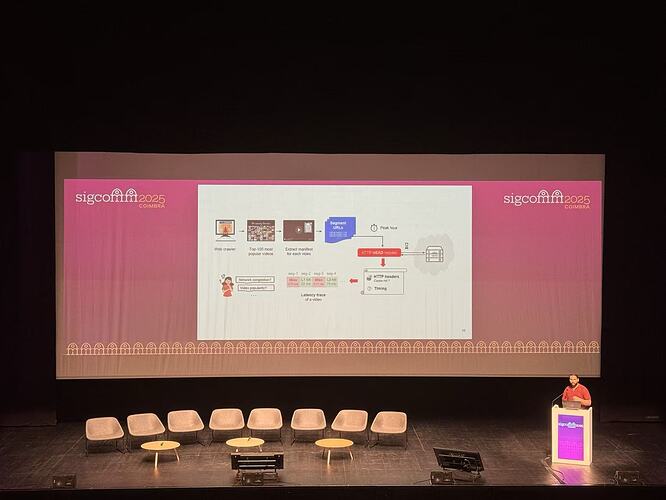

This paper looks at edge caching as a type of differentiation. Normally, differentiation means that ISPs treat traffic from different content providers in different ways, which can change the user’s Quality of Experience (QoE). The authors point out that caching can cause similar effects. If a cache gives one provider a higher hit rate than another, users will see better quality for that provider’s content. Today, cache providers usually charge per byte requested, not by hit rate. This means that popular providers can get better QoE without paying more, while smaller providers may be at a disadvantage. To study this, the authors measure hit rates from five major caching providers at different global locations and compare how they affect video and social media performance. The results show large differences, suggesting that caching can create hidden and important forms of differentiation.

Key idea and contribution

The key idea of this paper is that cache hit rates vary significantly across content providers, even when they use the same cache provider. Through a carefully designed large-scale measurement of major cache providers, the paper shows that this variation has a significant impact on video QoE but only a limited impact on social media browsing, explains the disparities through content popularity and the economics of shared caching, and outlines future directions on the relationship between caching and content popularity.
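To make the compared quantity concrete, the following is a minimal sketch of how per-provider hit rates could be tallied from measurement records. It is not the authors' tooling: the record layout, the status labels (`L1_HIT`, `L2_HIT`, `MISS`), and the choice to define the L2 hit rate as the fraction of L1 misses served by L2 are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical status labels; real cache providers expose cache status
# in provider-specific response headers with different names.
STATUSES = ("L1_HIT", "L2_HIT", "MISS")


def hit_rates(observations):
    """Compute per-content-provider L1 and L2 hit rates.

    observations: iterable of (content_provider, status) pairs,
    where status is one of STATUSES.
    Assumption: the L2 hit rate is conditional on an L1 miss.
    """
    counts = defaultdict(lambda: dict.fromkeys(STATUSES, 0))
    for provider, status in observations:
        counts[provider][status] += 1

    rates = {}
    for provider, c in counts.items():
        total = sum(c.values())
        l1_misses = total - c["L1_HIT"]
        rates[provider] = {
            "l1": c["L1_HIT"] / total,
            "l2": c["L2_HIT"] / l1_misses if l1_misses else 0.0,
        }
    return rates


# Made-up sample records for two hypothetical content providers.
sample = (
    [("A", "L1_HIT")] * 8 + [("A", "L2_HIT")] + [("A", "MISS")]
    + [("B", "L1_HIT")] * 4 + [("B", "L2_HIT")] * 3 + [("B", "MISS")] * 3
)
rates = hit_rates(sample)
```

In this made-up sample, both providers share the same conditional L2 hit rate, but provider A sees twice the L1 hit rate of provider B, which is the kind of gap the paper measures.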

Evaluation

The paper reveals large disparities in hit rates at both cache levels (L1 and L2) across content providers. These disparities are largely driven by content popularity: popular providers achieve higher hit rates, while less popular ones lag. Although private deals between cache providers and their clients cannot be fully ruled out, there is no strong evidence for them. Some cache providers charge extra for cache misses, which penalizes less popular content and gives popular providers a cost advantage. The crawler-based URL selection had minimal impact on L1 hits, with only a potential minor effect on L2 caches. The following summarizes the main findings of the video and browsing experiments.

Video: The video experiments show that different streaming platforms experience significantly different playback quality under the same cache provider because their L1/L2 hit rates differ. The effect is strongest under low bandwidth or high miss latency, where it leads to notable differences in adjusted bitrate and startup delay. QoE differences are primarily driven by cache hit rates, with occasional influence from hit latency, while factors such as DNS and processing overhead have minimal impact. Even at the same L1 hit rate, platforms can differ by several Mbps in bitrate because of L2 hit-rate variations or cache routing anomalies (e.g., requests directed to more distant PoPs or DNS misconfigurations). Overall, disparities in caching performance directly affect the video viewing experience.
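One way to see why L2 hit rates matter even when L1 hit rates match is a simple expected-latency calculation. The sketch below uses a textbook two-level cache model, not a formula from the paper; the function name, the assumption that the L2 hit rate is conditional on an L1 miss, and all latency values are illustrative.

```python
def expected_fetch_latency_ms(l1_hit_rate, l2_hit_rate, l1_ms, l2_ms, miss_ms):
    """Expected per-object fetch latency under a two-level cache model.

    Assumption: l2_hit_rate is the probability of an L2 hit given an L1 miss,
    and l1_ms, l2_ms, miss_ms are the latencies of each outcome.
    """
    l1_miss = 1.0 - l1_hit_rate
    return (
        l1_hit_rate * l1_ms
        + l1_miss * l2_hit_rate * l2_ms
        + l1_miss * (1.0 - l2_hit_rate) * miss_ms
    )


# Two hypothetical platforms with the same L1 hit rate (0.6) but
# different L2 hit rates; latencies are made-up round numbers.
high_l2 = expected_fetch_latency_ms(0.6, 0.9, l1_ms=10, l2_ms=50, miss_ms=400)
low_l2 = expected_fetch_latency_ms(0.6, 0.3, l1_ms=10, l2_ms=50, miss_ms=400)
```

With these made-up numbers, the platform with the lower L2 hit rate sees roughly three times the expected fetch latency (about 124 ms versus about 40 ms), and the gap widens as the miss latency grows.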

Browser: The browsing experiments show that caching generally has a smaller impact on the social-media user experience than on video. Most first and largest contentful objects are served as L1 or L2 hits, so miss latency has little effect. As a result, time to first contentful paint (FCP) and time to largest contentful paint (LCP) are similar across platforms for most combinations of vantage point and cache provider. For some specific combinations, however, differences of hundreds of milliseconds still occur, indicating that caching can occasionally cause noticeable browsing delays.

Personal thought

Understanding how caching affects content popularity is a complex but intriguing problem. Existing epidemic analysis provides descriptive insights but lacks predictive power, making it challenging to quantify how QoE-sensitive factors like video startup delay influence content virality. Developing a generative model that integrates user behavior, network effects, and QoE sensitivity could provide a more complete understanding of how caching strategies shape information spread, offering a path toward causal reasoning and better-informed caching design.

Q&A

Q1: Why focus on popularity and not diversity in caching?

A1: Popularity matters because less popular content is less likely to be cached. Diversity also plays a role: a service with less popular content but low diversity might still have its content cached more often than a very popular service with high diversity. The results shown are averages over the Top 100 videos; using more videos would change the results depending on each video's popularity.
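The interaction between popularity and diversity in this answer can be illustrated with a toy simulation. The sketch below assumes a shared LRU cache and a fully deterministic request pattern; both are illustrative assumptions, since the eviction policies of real cache providers are not described in the talk, and all names and parameters are hypothetical.

```python
from collections import OrderedDict


def simulate_shared_lru(capacity, steps, small_catalog, large_catalog, large_per_step):
    """Two services share one LRU cache (an assumed policy).

    Each step, the "small" service issues 1 request, cycling over a small
    catalog, and the "large" service issues large_per_step requests,
    cycling over a large catalog. Returns the hit rate of each service.
    """
    cache = OrderedDict()
    stats = {"small": [0, 0], "large": [0, 0]}  # [hits, requests]

    def request(service, key):
        stats[service][1] += 1
        if key in cache:
            stats[service][0] += 1
            cache.move_to_end(key)         # mark as most recently used
        else:
            cache[key] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict least recently used

    next_large = 0
    for step in range(steps):
        request("small", ("small", step % small_catalog))
        for _ in range(large_per_step):
            request("large", ("large", next_large % large_catalog))
            next_large += 1

    return {name: (hits / total if total else 0.0)
            for name, (hits, total) in stats.items()}


# The "large" service receives 3x the requests but spreads them over
# 1000 objects; the "small" service reuses only 5 objects.
lru_rates = simulate_shared_lru(
    capacity=50, steps=1000, small_catalog=5, large_catalog=1000, large_per_step=3
)
```

In this toy setup, the less-requested service with the small catalog hits the cache on almost every request after warm-up, while the more-requested service with the large catalog never reuses an object before it is evicted. Real request patterns are far less regular, so this only illustrates the direction of the effect.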

Q2: Does unpopular content always have a disadvantage in CDNs? Can anything be done?

A2: Yes, unpopular content is naturally at a disadvantage in caching. Possible strategies include innovations in other areas, or caching policies such as threshold-aware caching, to help less popular services compete. This is still an open question for the community.

Q3: How is “popularity” measured?

A3: Popularity is measured by the number of daily visits to the service, available from online data. Each video may have different popularity, but the study focuses on the Top 100 most popular videos to consider the best-case scenario.

Q4: Is this research arguing for regulation or net neutrality?

A4: No, the research only presents the data and shows that differences exist. Policy makers and regulators decide whether to act; the researchers are not pushing for any specific regulatory solution.