Title: InfiniteHBD: Building Datacenter-Scale High-Bandwidth Domain for LLM with Optical Circuit Switching Transceivers

Authors: Chenchen Shou (Peking University; StepFun; Lightelligence Pte. Ltd.); Guyue Liu (Peking University); Hao Nie (StepFun); Huaiyu Meng (Lightelligence Pte. Ltd.); Yu Zhou (StepFun); Yimin Jiang (Unaffiliated); Wenqing Lv (Lightelligence Pte. Ltd.); Yelong Xu (Lightelligence Pte. Ltd.); Yuanwei Lu (StepFun); Zhang Chen (Lightelligence Pte. Ltd.); Yanbo Yu (StepFun); Yichen Shen (Lightelligence Pte. Ltd.); Yibo Zhu (StepFun); Daxin Jiang (StepFun)

Introduction

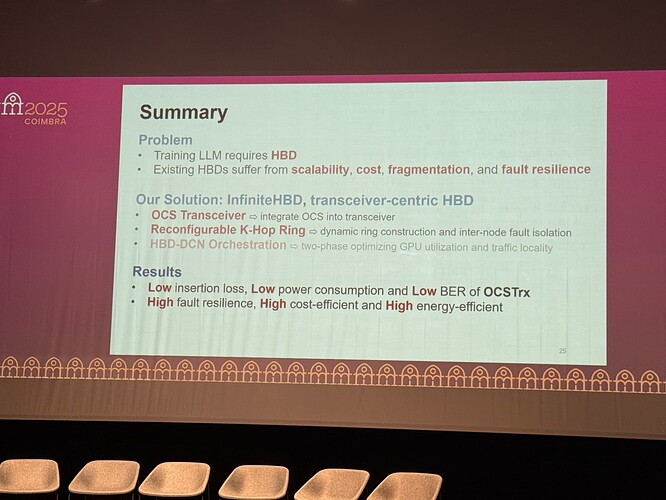

This paper addresses the core dilemma in designing High-Bandwidth Domains (HBDs) for training Large Language Models (LLMs). As model sizes grow, communication-intensive strategies like Tensor Parallelism place extreme demands on the HBD. However, existing architectures have fundamental flaws: “switch-centric” systems like NVIDIA’s NVL-72 offer high performance via costly switch fabrics but suffer from prohibitive scaling costs and resource fragmentation. In contrast, “GPU-centric” systems like Google’s TPUv3/Dojo are more cost-effective but have a large “fault explosion radius,” where a single GPU failure can disrupt the entire network topology. Hybrid architectures (e.g., TPUv4) have not fully resolved this issue. Therefore, designing an HBD that combines datacenter-scale scalability, low cost, and fine-grained fault isolation is a critical challenge for modern AI infrastructure.

Key idea and contribution:

The authors propose a novel “transceiver-centric” architecture called InfiniteHBD, whose core idea is to decentralize dynamic switching capabilities from centralized switches down to each transceiver. They make three key contributions: 1) They designed and implemented a Silicon Photonics (SiPh) based Optical Circuit Switching transceiver (OCSTrx), which features low power, manageable cost, and a rapid 60-80μs switching latency, allowing integration into standard optical modules. 2) Based on the OCSTrx, they proposed a reconfigurable K-Hop Ring topology. This topology uses an intra-node loopback mechanism to flexibly construct rings of arbitrary sizes to match job requirements, while using inter-node backup links to dynamically reroute optical paths around failures, thus shrinking the fault explosion radius to a single node. 3) They introduced an HBD-DCN orchestration algorithm that optimizes the physical placement of TP groups to minimize cross-rack traffic and alleviate congestion in the broader datacenter network.

Evaluation

The paper provides a comprehensive evaluation of InfiniteHBD through both hardware prototyping and large-scale simulation. Hardware tests confirmed the viability of the OCSTrx in key metrics like power, loss, and switching speed. System-level simulations, based on a 348-day real-world fault trace from a production cluster, demonstrated outstanding performance: its interconnect cost is merely 31% of NVL-72’s, and its GPU waste ratio due to faults and fragmentation is over 10x lower than existing solutions, approaching zero. Critically, its dynamic ring-formation capability can boost Model FLOPs Utilization (MFU) by up to 3.37x. This set of results is significant because it proves the architecture successfully breaks the inherent trade-off between cost/scalability and fault resilience in practice, offering a viable path for building next-generation AI clusters that are larger, more efficient, and more robust.

Personal thoughts

I find the paper’s “first-principles” systematic design to be its most commendable aspect. Instead of patching existing systems, the authors returned to the fundamental communication patterns of LLM training and proposed a complete, self-consistent solution spanning hardware, topology, and software. The idea of decentralizing and miniaturizing switching into the transceiver is highly innovative. However, a potential limitation is its deep optimization for ring-based communication (Ring-Allreduce), which leaves weaker native support for All-to-All communication patterns relied upon by strategies like Expert Parallelism (EP). Although a theoretical solution is proposed, its complexity and lack of hardware validation pose a challenge. Future directions worth exploring include how to more efficiently support mixed-parallelism models on this architecture and how to design a scalable and robust control plane capable of managing tens of thousands of dynamic transceivers.