Title: TLadder: QoE-Centric Video Ladder Optimization with Playback Feedback at Billion Scale

Author: Zhuqi Li, He Liu, Shenglan Huang, Baojin Geng, Jack Chen, Jialin Chen, Liyang Sun, Qianyun Ma, Peizhe Liu, Junjun Zhao, Yiting Liao, Jamie Chen, Qian Ma, Qiang Ma, Feng Qian (ByteDance)

Scribe: Letian Zhu (Xiamen University)

Introduction

This paper addresses the critical but underexplored problem of bitrate ladder optimization for adaptive video streaming, rather than proposing yet another adaptive bitrate (ABR) algorithm. A bitrate ladder is the set of encoded versions (bitrate and resolution pairs) in which a video is made available to players. It matters because it directly constrains the decision space available to the ABR algorithm: a poorly designed ladder with suboptimal bitrate-quality tradeoffs limits the client's ability to adapt to changing network conditions. Existing approaches fall short in one of three ways. Some use static ladders that are not tailored to content characteristics (such as Apple's and Google's approaches). Others rely on coarse-grained heuristic rules that make optimal QoE hard to reach. Still others depend on heavyweight solvers that cannot scale to billions of users.

Key idea and contribution

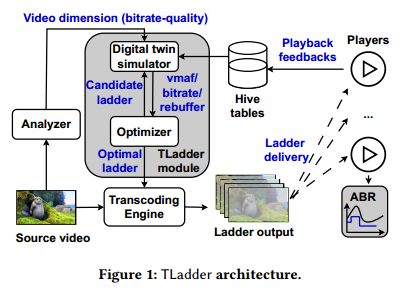

The authors built TLadder, a video bitrate ladder optimization system that explicitly maximizes quality of experience (QoE) through a principled optimization framework. Its core idea is to integrate two dimensions. The video content dimension covers the bitrate-quality tradeoff of candidate representations. The playback feedback dimension covers network conditions, rebuffering time, and playback bitrate drawn from real production data. The system has two main components: (1) an optimizer that uses dynamic programming, with polynomial complexity and provable optimality, to search the space of candidate ladders; and (2) a high-fidelity digital twin simulator that estimates QoE accurately by leveraging massive playback data from ByteDance's production environment. Previous approaches suffer from exponential complexity or the limits of heuristics. TLadder, by contrast, explores the full search space and obtains provably optimal solutions while scaling to millions of videos per day.
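To make the polynomial-complexity claim concrete, here is a minimal sketch of one way a dynamic program can pick an optimal ladder exactly. It is not the paper's formulation. It rests on simplifying assumptions that are ours. The client always plays the highest rung whose bitrate fits the session's throughput, falling back to the lowest rung (and stalling) otherwise. Per-session QoE is a hypothetical quality-minus-stall-minus-data proxy with made-up weights `MU` and `LAMBDA`. A list of historical throughput samples stands in for the digital twin. The names `utility`, `served_rung`, `evaluate_ladder`, and `optimize_ladder` are hypothetical.

```python
from typing import List, Sequence, Tuple

# Hypothetical QoE weights for illustration only; not TLadder's coefficients.
MU = 4.0        # penalty per unit of stall ratio
LAMBDA = 0.002  # penalty per kbps of data usage

Candidate = Tuple[float, float]  # (bitrate in kbps, quality score such as VMAF)


def utility(cand: Candidate, throughput: float) -> float:
    """Hypothetical per-session QoE proxy; throughput must be > 0 (kbps)."""
    bitrate, quality = cand
    # Crude rebuffering proxy: how far the bitrate overshoots the throughput.
    stall_ratio = max(0.0, bitrate / throughput - 1.0)
    return quality - MU * stall_ratio - LAMBDA * bitrate


def served_rung(ladder: Sequence[Candidate], throughput: float) -> Candidate:
    """Assumed client rule: highest rung that fits, else the lowest rung."""
    fitting = [c for c in ladder if c[0] <= throughput]
    return max(fitting) if fitting else min(ladder)


def evaluate_ladder(ladder: Sequence[Candidate], samples: Sequence[float]) -> float:
    """Simulator stand-in: mean QoE of a ladder over throughput samples."""
    return sum(utility(served_rung(ladder, t), t) for t in samples) / len(samples)


def optimize_ladder(
    cands: Sequence[Candidate], samples: Sequence[float], k: int
) -> Tuple[List[Candidate], float]:
    """Choose exactly k rungs maximizing evaluate_ladder, in O(k * n^2 * s)."""
    cands = sorted(cands)
    n = len(cands)
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= len(cands)")
    b = [c[0] for c in cands]
    inf = float("inf")

    def total(i: int, lo: float, hi: float) -> float:
        # Summed utility of rung i over samples with lo <= t < hi.
        return sum(utility(cands[i], t) for t in samples if lo <= t < hi)

    # best[m][j]: best summed utility of m rungs whose highest rung is j,
    # counting only samples below b[j]; prev[m][j] is the rung chosen before j.
    best = [[-inf] * n for _ in range(k + 1)]
    prev = [[-1] * n for _ in range(k + 1)]
    for j in range(n):
        best[1][j] = total(j, -inf, b[j])  # a lone rung also serves every t < b[j]
    for m in range(2, k + 1):
        for j in range(n):
            for i in range(j):
                if best[m - 1][i] == -inf:
                    continue
                # Rung i serves every sample between its bitrate and rung j's.
                v = best[m - 1][i] + total(i, b[i], b[j])
                if v > best[m][j]:
                    best[m][j], prev[m][j] = v, i

    # The top rung serves every sample at or above its own bitrate.
    def closed(j: int) -> float:
        return best[k][j] + total(j, b[j], inf)

    j = max(range(n), key=closed)
    score = closed(j) / len(samples)
    chosen, m = [], k
    while j != -1:  # walk the back-pointers from the top rung down
        chosen.append(cands[j])
        j, m = prev[m][j], m - 1
    return chosen[::-1], score
```

For example, `optimize_ladder(cands, samples, k=4)` returns the best four-rung subset of `cands` in ascending bitrate order, together with its mean per-session score. Under the fit-the-throughput rule, each rung serves only the throughput interval up to the next chosen rung. The objective therefore splits into per-interval terms, and that decomposition lets the DP avoid enumerating all C(n, k) subsets.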

Evaluation

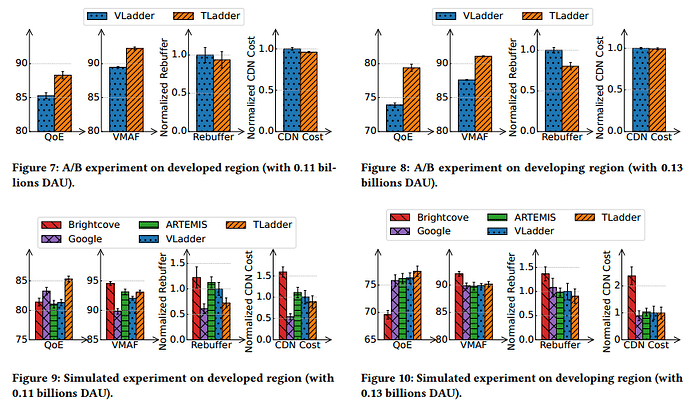

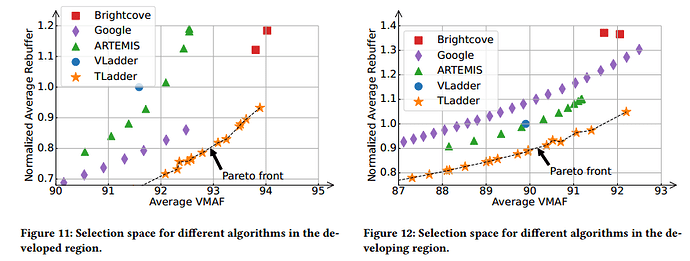

The authors evaluated TLadder through large-scale A/B tests covering 0.24 billion users and through comprehensive trace-driven simulations. Compared with their previous bitrate laddering approach, TLadder reduced rebuffering time by 6.2-20%. It raised visual quality by 2.7-3.5 VMAF points, on scores that lie in the 85-100 range. It also cut CDN traffic cost by 1-4%. TLadder also outperformed state-of-the-art approaches, including ARTEMIS, Google Ladder, and Brightcove, across different regions and network conditions. The result is significant because it shows that bitrate ladder optimization can be as impactful as improvements to ABR algorithms. It also remains practical to deploy at billion-user scale with minimal computational overhead (less than 0.2% of total transcoding resources).

Question

Q1: With TLadder, is there a different ladder for each video, or even a different ladder for each combination of video content, network conditions, and region?

A1: We create different ladders for different videos and for different countries. We assume each country has its own network throughput distribution.
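One way to formalize this answer uses notation introduced here for illustration only; none of it comes from the paper. For video $v$ and country $c$, let $\mathcal{C}_v$ be the video's candidate representations and $P_c$ the country's throughput distribution. Let $K$ be an assumed cap on the number of rungs. The ladder served to viewers of $v$ in $c$ is then the one with the highest expected QoE under that country's throughput:

```latex
L^{*}_{v,c} = \arg\max_{L \subseteq \mathcal{C}_v,\ |L| \le K} \; \mathbb{E}_{t \sim P_c}\!\left[\mathrm{QoE}(L, t)\right]
```

Network conditions enter only through $P_c$, so they are captured per country rather than per viewing session.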

Q2: Since the ladder decision is made live at transcoding time, how much overhead does it add to the transcoder, and could the transcoder become a bottleneck?

A2: The laddering step we add to transcoding doesn't consume many resources. As long as the laddering system doesn't exceed the resources used by transcoding itself, we are fine. In our microbenchmark, it consumes probably less than 0.1% of the resources that transcoding itself uses. So the bottleneck is still transcoding the video itself, and we add only a marginal cost.

Q3: About the QoE model: it seemed strange that it has three terms and the last one subtracts bandwidth. The more bandwidth you allocate, the higher the quality of experience should be. Why this approach?

A3: That last term is the one small difference in our QoE definition. We previously tried the model without it, and the results were not good. When we analyzed them, we found that some users hate spending too much data on video streaming platforms. If we consume too much of their data, they stop watching videos or even quit outright. The other reason is cost. Data usage costs users money, and it also costs the platform money, because we pay to stream content to users. So we combine these two concerns into the last term.
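A worked example helps show why subtracting data usage is not paradoxical. Assume a QoE of the generic three-term form below. Here $\bar{Q}$ is mean visual quality (e.g., VMAF), $T_{\text{rebuf}}$ is rebuffering time, and $D$ is data usage, proxied here by bitrate in kbps. The weights $\mu$ and $\lambda$ are hypothetical. This is an illustration, not the paper's exact equation or coefficients. Assume ample bandwidth ($T_{\text{rebuf}} = 0$) and $\lambda = 0.002$ per kbps. Now compare rung A (VMAF 90 at 4000 kbps) with rung B (VMAF 88 at 2500 kbps):

```latex
\begin{aligned}
\mathrm{QoE}   &= \bar{Q} - \mu\, T_{\text{rebuf}} - \lambda D \\
\mathrm{QoE}_A &= 90 - 0.002 \times 4000 = 82 \\
\mathrm{QoE}_B &= 88 - 0.002 \times 2500 = 83
\end{aligned}
```

The cheaper rung wins despite its lower quality. The extra 1500 kbps costs 3 QoE points, more than the 2 VMAF points it buys. Without the last term, the model would always prefer the higher rung whenever it can be streamed without stalling.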

Personal thoughts

This paper makes a significant contribution to video streaming research by shifting attention from ABR algorithms to the equally important but less studied problem of bitrate ladder optimization. Its strengths include a principled approach with provable optimality, an impressive real-world deployment scale, and a comprehensive evaluation that combines A/B tests with simulations. The digital twin simulator is particularly clever, since it lets the system adapt to changing production conditions.

The work also has limitations. It relies heavily on historical playback data, which may not capture sudden changes in network conditions or user behavior. In addition, because the work targets short videos, its QoE formulation drops the quality-switch penalty, which may limit its applicability to long-form content. Future research could explore joint optimization of ABR and ladder construction (two-way feedback). Other directions include predictive models of network condition changes and extensions to emerging video technologies such as VR/AR streaming, where the quality-bitrate relationship may be fundamentally different.