Title : ZENITH: Towards A Formally Verified Highly-Available Control Plane

Authors : Pooria Namyar, Arvin Ghavidel (University of Southern California); Mingyang Zhang (Google); Harsha V. Madhyastha, Srivatsan Ravi, Chao Wang, Ramesh Govindan (University of Southern California)

Introduction

The problem studied is that bugs in modern, large-scale software-defined network (SDN) controllers can lead to state inconsistencies between the high-level intent and the actual state of the data plane. This is an important and interesting problem because these inconsistencies severely reduce network availability and performance, causing outages that can last for tens of minutes and threaten strict “five-nines” availability budgets. Existing systems fall short because they rely on a recovery mechanism called periodic reconciliation (PR), which periodically scans all switches to find and fix inconsistencies. This approach is insufficient as it limits availability while waiting for the next cycle, scales poorly with network size, and frequent reconciliation can itself become a source of high latency.

Key idea and contribution :

The key idea of ZENITH is to proactively prevent inconsistencies by design, rather than relying on recovery mechanisms. The authors built ZENITH, a microservice-based controller that is formally verified to ensure it eventually reaches a state consistent with the network operator’s intent, even under a wide range of component and network failures.

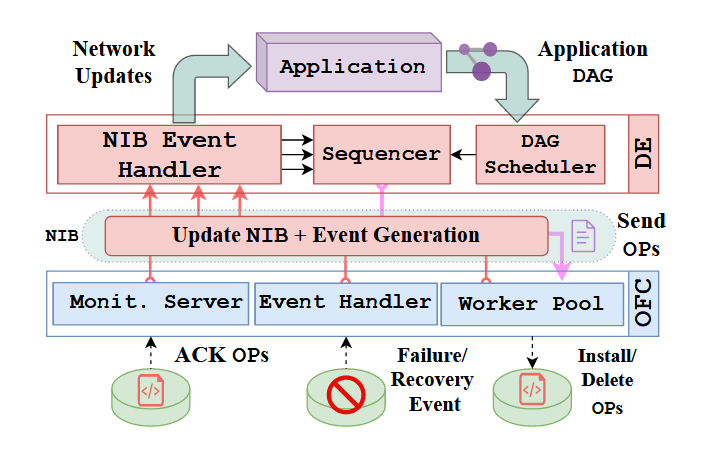

The paper makes three main contributions. First, it presents ZENITH-core, the core controller specified and verified in TLA+. It uses a Directed Acyclic Graph (DAG) to represent application intent and is proven to be correct, making it one of the largest known TLA+ specifications to date. Second, it provides a technique for developers to formally specify and verify their SDN applications (ZENITH-apps) independently of the core, which preserves the agility of the microservice architecture. Third, it introduces NADIR, a tool that automatically generates executable Python code from the verified specifications, minimizing the risk of errors during manual implementation.

Evaluation

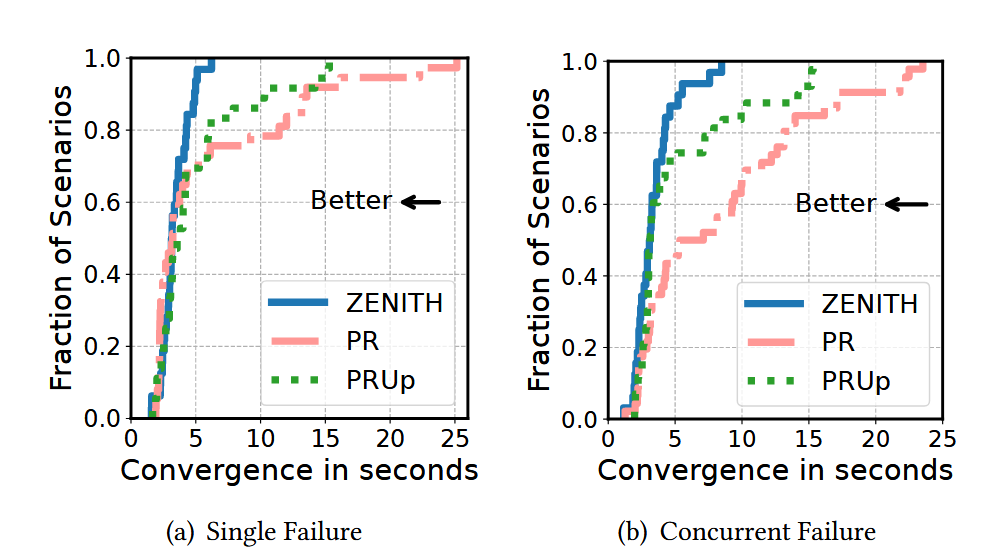

The evaluation demonstrates that ZENITH significantly outperforms traditional PR-based controllers. Experiments were conducted on a large testbed using various topologies, replaying error traces discovered during formal verification and injecting random failures. The results show that ZENITH converges more than 5 times faster than a PR-based controller at the 99th percentile. Furthermore, unlike PR, ZENITH’s tail convergence latency does not degrade as the network size increases. In a wide-area network traffic engineering scenario, ZENITH achieved 1.23x higher throughput compared to the PR baseline by avoiding inconsistencies during overlapping network updates. By preventing the root causes of many outages, ZENITH improves network availability and makes it more feasible to meet these demanding reliability goals at scale.

Q&A

Q1: What rules do you follow when reducing the system into subcomponents?

A1: Our goal was to design a controller that resembles today’s controllers. These controllers are structured as microservices, and within each microservice, there is some inherent parallelism.

Q2: Did you further look inside each microservice to reduce it into even smaller subcomponents?

A2: Let’s go back to the diagram, each microservice is represented by a red box. Each of these can be treated as an independent component. The first step is to decompose the system into microservices. The next step is to break down each microservice into its individual internal components.

Q3: Are there scalability limitations when handling complex specifications during verification?

A3: Yes. Formal model checking grows rapidly in complexity with larger topologies, requiring more hours to complete. To address this, general properties are proven and validated experimentally to ensure correctness at scale.

Q4: Is there a way to build a pipeline for incremental or continuous verification of new specifications?

A4: A pipeline is possible by combining proofs, model checking, and model generalization. This hybrid approach helps manage very complex specifications while balancing scalability and correctness.

Personal thoughts

This paper uses a fundamental “correct-by-construction” approach to solve a critical system problem. Instead of patching inconsistencies with a slow and unscalable mechanism (PR), it tackles the root cause by formally verifying the controller’s design. The three contributions are synergistic and practical: the verified core provides the foundation, independent app verification maintains development agility, and automatic code generation closes the gap between a correct design and a correct implementation. The use of a DAG as an abstraction for application intent is particularly elegant and is key to enabling the independent verification of applications. It is worth considering whether this framework can verify the performance attributes of the network in addition to verifying correctness.